Missing model file

#1 by rasmusjs - opened

Hi!

When running this GGUF with Ollama, the model appears to be missing a proper chat template / Modelfile. `ollama show --modelfile` returns only `TEMPLATE {{ .Prompt }}`, and the model produces repetitive output that does not follow instructions (example below).

After applying the Gemma-style template from the larger Borealis model (<start_of_turn>user ... <start_of_turn>model ... <end_of_turn>), the 270M model behaves like a normal instruct model and answers correctly.

So the GGUF seems to be missing the correct instruction/chat template metadata needed for chat inference.
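For anyone who wants to check other models for the same symptom, here is a rough sketch that inspects Modelfile text (for example, the output of `ollama show --modelfile`) and reports whether the template is just the pass-through `{{ .Prompt }}`. The function names `extract_template` and `is_passthrough_template` are my own, and the parsing is a simplification that only looks at the first `TEMPLATE` instruction; it is not how Ollama itself parses Modelfiles.

```python
import re

# Matches the first TEMPLATE instruction in three simplified forms:
# triple-quoted (may span lines), double-quoted on one line, or bare text.
_TEMPLATE_RE = re.compile(
    r'^TEMPLATE\s+(?:"""(?P<triple>.*?)"""|"(?P<quoted>[^"\n]*)"|(?P<bare>[^\n]+))',
    re.MULTILINE | re.DOTALL,
)


def extract_template(modelfile_text):
    """Return the body of the first TEMPLATE instruction, or None if absent."""
    match = _TEMPLATE_RE.search(modelfile_text)
    if match is None:
        return None
    groups = match.group("triple", "quoted", "bare")
    return next(g for g in groups if g is not None)


def is_passthrough_template(modelfile_text):
    """True when the template only forwards the raw prompt ({{ .Prompt }})."""
    template = extract_template(modelfile_text)
    if template is None:
        return False
    return re.fullmatch(r"\{\{\s*\.Prompt\s*\}\}", template.strip()) is not None


if __name__ == "__main__":
    broken = "FROM some-model\nTEMPLATE {{ .Prompt }}\n"
    print(is_passthrough_template(broken))
```

A pass-through result means the user message reaches the model without any turn markers, which would explain the base-model-like output described above.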

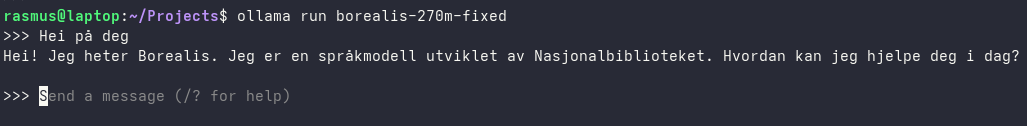

Without model file:

This is the Modelfile, including the chat template, that fixed the issue for me:

FROM hf.co/NbAiLab/borealis-270m-instruct-preview-gguf:Q8_0

TEMPLATE """{{- $systemPromptAdded := false }}
{{- range $i, $_ := .Messages }}
{{- $last := eq (len (slice $.Messages $i)) 1 }}
{{- if eq .Role "user" }}<start_of_turn>user
{{- if (and (not $systemPromptAdded) $.System) }}
{{- $systemPromptAdded = true }}
{{ $.System }}
{{ end }}
{{ .Content }}<end_of_turn>
{{ if $last }}<start_of_turn>model
{{ end }}
{{- else if eq .Role "assistant" }}<start_of_turn>model
{{ .Content }}{{ if not $last }}<end_of_turn>
{{ end }}
{{- end }}
{{- end }}"""

PARAMETER stop <end_of_turn>
PARAMETER top_k 64
PARAMETER top_p 0.95
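To make it easier to see what prompt the template above should produce, here is a small Python sketch that mirrors its logic as I read it: the system prompt is folded into the first user turn, each turn is closed with `<end_of_turn>`, and a trailing `<start_of_turn>model` is added when the last message is from the user. The function name `render_gemma_prompt` is hypothetical, and the exact newline placement is my interpretation of the template's whitespace trimming (with the template written without blank lines between its lines), not output captured from Ollama.

```python
def render_gemma_prompt(messages, system=""):
    """Approximate the prompt string the Gemma-style template builds.

    messages: list of {"role": "user" | "assistant", "content": str}
    system:   optional system prompt, injected into the first user turn only
    """
    parts = []
    system_added = False
    for index, message in enumerate(messages):
        is_last = index == len(messages) - 1
        if message["role"] == "user":
            parts.append("<start_of_turn>user\n")
            if system and not system_added:
                # Gemma has no separate system role, so the system text
                # is prepended to the first user message.
                system_added = True
                parts.append(f"{system}\n\n")
            parts.append(f"{message['content']}<end_of_turn>\n")
            if is_last:
                # Open the model turn so generation starts as the assistant.
                parts.append("<start_of_turn>model\n")
        elif message["role"] == "assistant":
            parts.append(f"<start_of_turn>model\n{message['content']}")
            if not is_last:
                parts.append("<end_of_turn>\n")
    return "".join(parts)


if __name__ == "__main__":
    print(render_gemma_prompt([{"role": "user", "content": "Hi"}], system="Be brief"))
```

With the `PARAMETER stop <end_of_turn>` line in place, generation stops once the model closes its own turn, which is what keeps the output from running on repetitively.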

This should be fixed now 😀

versae changed discussion status to closed