SAIDResearch

LAM

SAID-LAM-v1

LAM (Linear Attention Models) is a new model family that goes beyond semantic transformers.

SAID-LAM-v1 is the Linear Attention Memory model in the LAM family.

PHILOSOPHY: DETERMINISM OVER PROBABILITY

"The answer IS X. Because I Said so." — At ANY scale

Model Details

| Property | Value |
|---|---|
| Model Category | LAM (Linear Attention Models); SAID-LAM-v1: Linear Attention Memory |
| Parameters | 23,848,788 |
| Embedding Dimension | 384 |
| Max Context Length | 32,768 tokens |
| Memory Usage | ~95 MB |
| Complexity | O(n) linear in both time and memory |
| Framework | Pure Rust (Candle), no PyTorch required |
| Package Size | ~6 MB binary + 92 MB weights (auto-downloaded) |
| License | Apache 2.0 (weights) / Proprietary (code) |

Performance

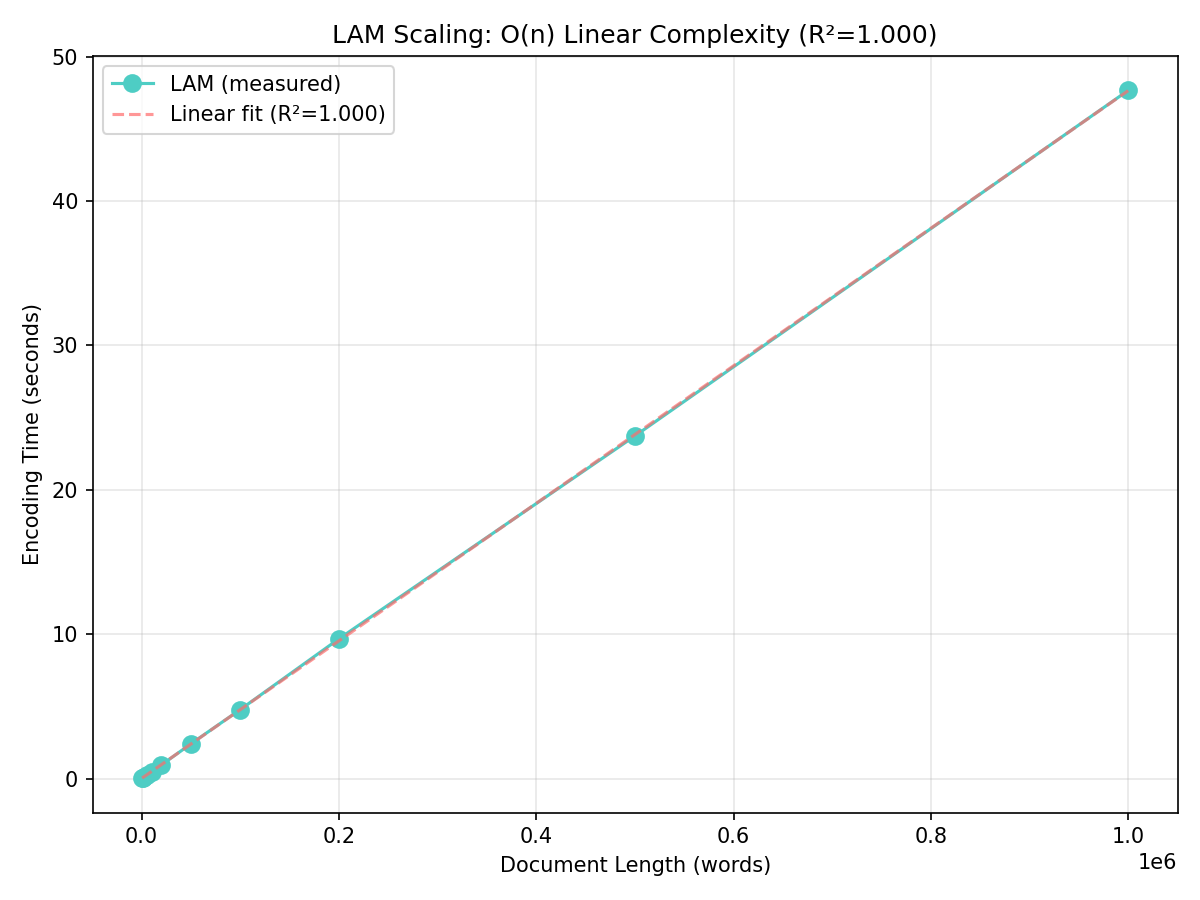

O(n) Linear Scaling

LAM scales linearly with input length. This was validated empirically up to 1M words (R² = 1.000 for a linear fit), with memory growing from ~0 MB at small inputs to ~15 MB at 1M words.
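The R² figure measures how well a straight line fits measured cost against input length. The sketch below shows that calculation using ordinary least squares in Python's standard library. The `lengths` and `seconds` values are hypothetical placeholders, not LAM measurements, and `linear_fit_r2` is an illustrative helper name.

```python
def linear_fit_r2(xs, ys):
    """Least-squares fit of y = slope * x + intercept; returns (slope, intercept, r2)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired (x, y) points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2 = 1 - (residual sum of squares) / (total sum of squares around the mean)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 1.0
    return slope, intercept, r2


# Hypothetical measurements (NOT LAM results): input size in words vs. seconds
lengths = [1_000, 10_000, 100_000, 1_000_000]
seconds = [0.012, 0.115, 1.150, 11.500]
slope, intercept, r2 = linear_fit_r2(lengths, seconds)
print(f"slope = {slope:.3e} s/word, intercept = {intercept:.4f} s, R^2 = {r2:.4f}")
```

With log-spaced lengths like these, R² is dominated by the largest inputs. It is therefore worth also checking the per-word cost at the small end.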

STS-B Semantic Quality

Spearman r = 0.8181 on the STS-B test set (1,379 sentence pairs).
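STS-B is scored by rank-correlating the model's cosine similarities with the human gold scores for each pair. The sketch below computes Spearman's rho with the standard library: it takes the Pearson correlation of the two rank vectors, and tied values share their average rank. The five score pairs are made up for illustration and are not STS-B data. In practice, `scipy.stats.spearmanr` computes the same statistic.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of equal values starting at position i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def spearman(xs, ys):
    """Spearman's rho: Pearson correlation of the two rank vectors."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Made-up example: model cosine scores vs. gold similarity labels (0-5 scale)
predicted = [0.91, 0.15, 0.62, 0.40, 0.88]
gold = [4.8, 0.5, 3.0, 3.0, 4.2]
print(f"Spearman rho = {spearman(predicted, gold):.4f}")
```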

MTEB LongEmbed Benchmarks

Combined LongEmbed score (SAID-LAM-v1, average over all six tasks): ~91.0%.

| Task | Score |
|---|---|
| LEMBNeedleRetrieval | 100.00% |
| LEMBPasskeyRetrieval | 100.00% |
| LEMBNarrativeQARetrieval | 69.93% |
| LEMBSummScreenFDRetrieval | 96.59% |
| LEMBQMSumRetrieval | 85.76% |
| LEMBWikimQARetrieval | 93.98% |
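The ~91.0% combined score quoted above is the unweighted mean of the six task scores in this table. Here is a quick check of that arithmetic, with the scores copied from the table:

```python
# Per-task LongEmbed scores (%) for SAID-LAM-v1, copied from the table
longembed_scores = {
    "LEMBNeedleRetrieval": 100.00,
    "LEMBPasskeyRetrieval": 100.00,
    "LEMBNarrativeQARetrieval": 69.93,
    "LEMBSummScreenFDRetrieval": 96.59,
    "LEMBQMSumRetrieval": 85.76,
    "LEMBWikimQARetrieval": 93.98,
}

# Unweighted mean across the six tasks
combined = sum(longembed_scores.values()) / len(longembed_scores)
print(f"Combined LongEmbed score: {combined:.2f}%")  # Combined LongEmbed score: 91.04%
```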

LongEmbed SOTA comparison

| Task | SAID-LAM-v1 (23M) | Global SOTA |
|---|---|---|
| LEMBNeedleRetrieval | 100.00% | 100.00% |
| LEMBPasskeyRetrieval | 100.00% | 100.00% |
| LEMBNarrativeQARetrieval | 69.93% | 66.10% |
| LEMBSummScreenFDRetrieval | 96.59% | 99.10% |
| LEMBQMSumRetrieval | 85.76% | 83.70% |
| LEMBWikimQARetrieval | 93.98% | 91.20% |

Install

pip install said-lam

CUDA (GPU) wheels are published under a separate PyPI project:

pip install said-lam-gpu

To upgrade an existing installation to the latest version:

pip install --upgrade said-lam
# or, for the GPU version:
pip install --upgrade said-lam-gpu

To install a specific older version:

pip install said-lam==1.0.2
# or, for the GPU version:
pip install said-lam-gpu==1.0.2

To uninstall the package:

pip uninstall said-lam
# or, for the GPU version:
pip uninstall said-lam-gpu

Note: Both packages provide the same import namespace, said_lam. Install only one of them at a time to avoid conflicts.
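Both distributions install the same said_lam module. You can spot a conflicting setup by asking `importlib.metadata` which of the two is present. This is a minimal sketch: `installed_lam_distributions` is a hypothetical helper name, and it relies only on the PyPI project names given above.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_lam_distributions(candidates=("said-lam", "said-lam-gpu")):
    """Return (name, version) pairs for each candidate distribution that is installed."""
    found = []
    for name in candidates:
        try:
            found.append((name, version(name)))
        except PackageNotFoundError:
            pass  # not installed in this environment
    return found


if __name__ == "__main__":
    installed = installed_lam_distributions()
    if len(installed) > 1:
        print("Warning: both CPU and GPU packages are installed:", installed)
        print("Uninstall one of them; both provide the said_lam module.")
    elif installed:
        print("Installed:", installed[0])
    else:
        print("Neither said-lam nor said-lam-gpu is installed.")
```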

On first use, the model weights (~92 MB) are downloaded automatically from Hugging Face and cached locally. The pip package itself is only ~6 MB (a compiled Rust binary; the weights are NOT bundled).

Drop-in sentence-transformers Replacement

LAM is a drop-in replacement for sentence-transformers. Only the import and the model ID change; encode() and its output format stay the same.

Before (sentence-transformers):

from sentence_transformers import SentenceTransformer
model = SentenceTransformer("all-MiniLM-L6-v2")
embeddings = model.encode(["Hello world", "Semantic search"])
# embeddings.shape == (2, 384), float32, L2-normalized
similarity = embeddings[0] @ embeddings[1]

After (LAM):

from said_lam import LAM
model = LAM("SAIDResearch/SAID-LAM-v1")
embeddings = model.encode(["Hello world", "Semantic search"])
# embeddings.shape == (2, 384), float32, L2-normalized
similarity = embeddings[0] @ embeddings[1]

Same output format, same shapes, same downstream compatibility. Everything that works with sentence-transformers embeddings (FAISS, ChromaDB, Pinecone, numpy dot product) works with LAM embeddings.

| Property | sentence-transformers | LAM |
|---|---|---|
| Output | (N, 384) ndarray, float32 | (N, 384) ndarray, float32 |
| L2-normalized | Yes (default) | Yes (default) |
| Cosine sim = dot product | Yes | Yes |
| Max tokens | 512 | 12K (encode) / 32K (SCA) |
| Complexity | O(n²) attention | O(n) linear |
| Framework | PyTorch (~2 GB) | Rust (~6 MB) |
| Memory at 1M tokens (no chunking) | OOM / impractical | ~15 MB |
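The "OOM / impractical" entry follows from the n × n score matrix that naive softmax attention materializes for each head in each layer. The back-of-the-envelope sketch below assumes float32 (4-byte) scores. Fused kernels such as FlashAttention avoid storing this matrix, and sentence-transformers truncates input at its max sequence length anyway. Read the numbers as the cost of naive O(n²) attention, not as a measurement of either library. The helper names are illustrative.

```python
def naive_attention_scores_bytes(n_tokens: int, bytes_per_value: int = 4) -> int:
    """Bytes for one materialized n x n attention score matrix (one head, one layer)."""
    return n_tokens * n_tokens * bytes_per_value


def format_bytes(num_bytes: float) -> str:
    """Human-readable size using binary (1024-based) units."""
    size = float(num_bytes)
    for unit in ("B", "KiB", "MiB", "GiB", "TiB"):
        if size < 1024 or unit == "TiB":
            return f"{size:.1f} {unit}"
        size /= 1024
    raise AssertionError("unreachable")


for n in (512, 32_768, 1_000_000):
    print(f"{n:>9,} tokens -> {format_bytes(naive_attention_scores_bytes(n))} per head, per layer")
# 512 -> 1.0 MiB, 32,768 -> 4.0 GiB, 1,000,000 -> 3.6 TiB
```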

Usage

FREE Tier — Embeddings (up to 12K tokens)

from said_lam import LAM
model = LAM("SAIDResearch/SAID-LAM-v1")
embeddings = model.encode(["Hello world", "Semantic search is powerful"])
# embeddings.shape == (2, 384)
# Cosine similarity (L2-normalized by default)
similarity = embeddings[0] @ embeddings[1]
print(f"Similarity: {similarity:.4f}")

BETA SCA (SAID Crystalline Attention) — MTEB testing only

SCA (SAID Crystalline Attention, BETA) is enabled for MTEB testing only. It provides long-context retrieval on LongEmbed tasks such as LEMBNeedleRetrieval and LEMBPasskeyRetrieval, scoring 100% on both. Run it through the MTEB evaluation flow; no signup or activation is required for benchmarking.

Common Patterns

Similarity Between Texts

Embeddings are L2-normalized, so cosine similarity is just a dot product:

emb = model.encode(["The cat sat on the mat", "A kitten rested on the rug"])
similarity = float(emb[0] @ emb[1])
print(f"Similarity: {similarity:.4f}")  # ~0.5761
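Why the dot product is enough: cosine similarity is a·b / (‖a‖‖b‖). L2 normalization makes both norms 1, so the denominator drops out. The small standard-library check below uses two arbitrary hypothetical vectors, not model output.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cosine(a, b):
    """Cosine similarity computed directly from unnormalized vectors."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


# Two arbitrary (hypothetical) unnormalized vectors
a = [3.0, 4.0, 0.0]
b = [1.0, 2.0, 2.0]
print(cosine(a, b))                           # 11 / 15, about 0.7333
print(dot(l2_normalize(a), l2_normalize(b)))  # same value after normalizing
```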

Batch Similarity Matrix

import numpy as np
queries = ["How is the weather?", "What time is it?"]
candidates = ["Is it raining today?", "Do you have the time?", "Nice shoes"]
emb_q = model.encode(queries)     # (2, 384)
emb_c = model.encode(candidates)  # (3, 384)
sim_matrix = emb_q @ emb_c.T      # (2, 3)

Semantic Search Over a Corpus (FREE Tier)

import numpy as np
corpus = ["Python is a language", "The Eiffel Tower is in Paris",
          "ML uses neural networks", "Speed of light is 299792458 m/s"]
corpus_emb = model.encode(corpus)
query_emb = model.encode(["fastest thing in physics"])
scores = (query_emb @ corpus_emb.T)[0]  # one score per corpus entry
ranked = np.argsort(scores)[::-1]       # indices, best match first
for i in ranked:
    print(f" {scores[i]:.4f} {corpus[i]}")
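np.argsort sorts every score, which is fine for four documents. For a large corpus where you only need the best few hits, a partial selection is cheaper. heapq.nlargest keeps only k candidates in a heap, so it runs in O(N log k) instead of O(N log N). In this standard-library sketch, `top_k` is a hypothetical helper. The `scores` list is hypothetical and stands in for the scores array computed above; a NumPy array also works, since enumerate iterates it element by element.

```python
import heapq


def top_k(scores, k):
    """Return (index, score) pairs for the k highest scores, best first."""
    return heapq.nlargest(k, enumerate(scores), key=lambda pair: pair[1])


corpus = ["Python is a language", "The Eiffel Tower is in Paris",
          "ML uses neural networks", "Speed of light is 299792458 m/s"]
scores = [0.05, 0.02, 0.11, 0.47]  # hypothetical query-corpus similarities
for idx, score in top_k(scores, k=2):
    print(f" {score:.4f} {corpus[idx]}")
```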

Matryoshka Dimensionality Reduction

emb_128 = model.encode(["Hello world"], output_dim=128)  # (1, 128)
emb_64 = model.encode(["Hello world"], output_dim=64)    # (1, 64)
# Automatically truncated and re-normalized to unit length

Example impact on STS12 (cosine main_score, GPU):

| Dim | STS12 score | Relative to 384-d |
|---|---|---|
| 384 | 0.7493 | 100.0% |
| 256 | 0.7472 | 99.7% |
| 128 | 0.7459 | 99.6% |
| 64 | 0.7327 | 97.8% |
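The sketch below shows what "truncated and re-normalized" means. It keeps the leading components, which is the standard Matryoshka convention, then rescales to unit length so the dot-product-equals-cosine property still holds. It assumes that convention and is not said_lam's source code; `truncate_and_renormalize` is a hypothetical helper. In practice, use output_dim or model.truncate_embeddings.

```python
import math


def truncate_and_renormalize(embedding, dim):
    """Keep the first `dim` components of an embedding, then rescale to unit L2 norm."""
    if not 0 < dim <= len(embedding):
        raise ValueError(f"dim must be between 1 and {len(embedding)}")
    head = list(embedding[:dim])
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("truncated vector has zero norm; cannot renormalize")
    return [x / norm for x in head]


# Hypothetical 4-dim unit vector standing in for a 384-dim embedding
full = [0.5, 0.5, 0.5, 0.5]
short = truncate_and_renormalize(full, 2)
print(short)  # both components are 1/sqrt(2), about 0.7071
print(math.sqrt(sum(x * x for x in short)))  # unit norm again (up to float rounding)
```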

Token Limits

- encode(): up to 12,000 tokens per text. Returns embeddings for your RAG pipeline.
- index() + search(): up to 32,768 tokens per text (MTEB BETA SCA, LongEmbed/MTEB testing group only). SCA streaming: no embeddings, perfect recall.

encode() returns one embedding per input text, capped at 12K tokens:

# Each text gets one embedding; long texts are chunked at 12K tokens
embeddings = model.encode(["short text", "very long text..."])  # (2, 384)
# Use output_dim for smaller embeddings (Matryoshka)
embeddings = model.encode(["short text", "very long text..."], output_dim=128)  # (2, 128)

Long documents?

encode() caps at 12K tokens. For LongEmbed benchmarks (MTEB BETA SCA testing only), index() + search() support up to 32K tokens via SCA.
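encode() stops at 12K tokens per text. A FREE-tier RAG pipeline may need to cover longer documents. One common approach, a general technique rather than a said_lam feature, is to split the document into windows and pass each window to encode() as its own text. You then keep a mapping from each window back to its source document. This is a minimal sketch of the splitting step on a list of token IDs. `token_windows` is a hypothetical helper, the 12,000 default mirrors the limit stated above, and tokenizing and decoding are left to your tokenizer.

```python
def token_windows(token_ids, max_tokens=12_000, overlap=0):
    """Split a token sequence into windows of at most `max_tokens` tokens.

    The last `overlap` tokens of each window are repeated at the start of the next,
    so text near a boundary appears in both windows.
    """
    if max_tokens <= 0 or not 0 <= overlap < max_tokens:
        raise ValueError("require max_tokens > 0 and 0 <= overlap < max_tokens")
    step = max_tokens - overlap
    windows = []
    for start in range(0, len(token_ids), step):
        windows.append(token_ids[start:start + max_tokens])
        if start + max_tokens >= len(token_ids):
            break  # this window already reaches the end of the sequence
    return windows


# Hypothetical 30,000-token document represented by integer token IDs
doc = list(range(30_000))
print([len(w) for w in token_windows(doc)])                 # [12000, 12000, 6000]
print([len(w) for w in token_windows(doc, overlap=1_000)])  # [12000, 12000, 8000]
```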

MTEB Evaluation

One model, one class: pass the same LAM instance to mteb.evaluate(). LAM implements MTEB's encoder protocol.

from said_lam import LAM
import mteb
model = LAM("SAIDResearch/SAID-LAM-v1")
tasks = mteb.get_tasks(tasks=["LEMBNeedleRetrieval", "LEMBPasskeyRetrieval"])
results = mteb.evaluate(model=model, tasks=tasks)

API Reference

LAM(model_name_or_path, device)

| Parameter | Default | Description |
|---|---|---|
| model_name_or_path | "SAIDResearch/SAID-LAM-v1" | Hugging Face model ID to load, or a local directory containing the model files |
| device | None (auto) | Auto-selects a CUDA GPU if available, otherwise CPU |

Core Methods

| Method | Tier | Description |
|---|---|---|
| model.encode(sentences, output_dim=None) | FREE+ | Encode to embeddings (384, 256, 128, or 64 dims) |
| model.index(doc_id, text) | MTEB | Index a document for search (benchmarks) |
| model.search(query, top_k) | MTEB | Retrieve documents by query (benchmarks) |
| model.truncate_embeddings(emb, dim) | FREE+ | Matryoshka truncation (64/128/256) |
| model.clear() | MTEB | Clear indexed documents (benchmarks) |
| model.stats() | FREE+ | Model statistics |

Tier System

| Tier | encode() | index() + search() (SCA) | How to Get | Features |
|---|---|---|---|---|
| FREE | 12K | n/a | Default | encode() only: embeddings for RAG |
| MTEB | 12K | 32K | Auto-detected | SCA for LongEmbed retrieval (benchmarks only) |
| LICENSED | 32K | 32K | Coming soon | + persistent storage + cloud sync |
| INFINITE | Unlimited | Unlimited | Coming soon | Oracle mode |

GPU Support

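The default said-lam package installs CPU wheels. For CUDA, install the prebuilt said-lam-gpu package instead (see Install), or build from source:

# Build from source with CUDA (Linux)
pip install maturin
maturin build --release --features cuda
# Metal (macOS Apple Silicon)
maturin build --release --features metal
# maturin writes the built wheel to target/wheels/; install it with pip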

Model Files

| File | Size | Description |
|---|---|---|
| model.safetensors | 92 MB | Model weights (SafeTensors format) |
| config.json | 1 KB | Model configuration |
| tokenizer.json | 467 KB | Tokenizer vocabulary |
| tokenizer_config.json | 350 B | Tokenizer settings |
| vocab.txt | 232 KB | WordPiece vocabulary |
| special_tokens_map.json | 112 B | Special token definitions |

Citation

@misc{said-lam-v1,
  title={SAID-LAM-v1: Linear Attention Memory},
  author={SAIDResearch},
  year={2026},
  url={https://saidhome.ai},
  note={23.85M parameter embedding model with O(n) linear complexity.
        384-dim embeddings, 32K context window, 100% NIAH recall.
        Distilled from all-MiniLM-L6-v2. Pure Rust (Candle) implementation.}
}

Links

- Organization: SAIDResearch

- PyPI: said-lam

- Distilled From: all-MiniLM-L6-v2

- Framework: Candle (Hugging Face Rust ML)

- Contact: research@saidhome.ai


Evaluation Results (self-reported, MTEB main_score)

- mteb/LEMBNeedleRetrieval: 1.000
- mteb/LEMBPasskeyRetrieval: 1.000
- mteb/LEMBNarrativeQARetrieval (test set): 0.699
- mteb/LEMBSummScreenFDRetrieval (validation set): 0.966
- mteb/LEMBQMSumRetrieval (test set): 0.858
- mteb/LEMBWikimQARetrieval (test set): 0.940
- mteb/BIOSSES (test set): 0.741
- mteb/SICK-R (test set): 0.736
- mteb/STS12 (test set): 0.749
- mteb/STS13 (test set): 0.857
- mteb/STS14 (test set): 0.828
- mteb/STS15 (test set): 0.878
- mteb/STSBenchmark (test set): 0.818
- mteb/STS17 (test set): 0.871
- mteb/STS22.v2 (test set): 0.560
