
GitHub Repo | Technical Report

👋 Join us on Discord and WeChat

What's New

- [2025.06.06] The MiniCPM4 series is released! The series achieves extreme efficiency improvements while maintaining optimal performance at the same scale, with over 5x generation acceleration on typical end-side chips. See the technical report for details.🔥🔥🔥

MiniCPM4 Series

The MiniCPM4 series consists of highly efficient large language models (LLMs) designed explicitly for end-side devices. The series achieves this efficiency through systematic innovation in four key dimensions: model architecture, training data, training algorithms, and inference systems.

- MiniCPM4-8B: The flagship of MiniCPM4, with 8B parameters, trained on 8T tokens.

- MiniCPM4-0.5B: The small version of MiniCPM4, with 0.5B parameters, trained on 1T tokens.

- MiniCPM4-8B-Eagle-FRSpec: Eagle head for FRSpec, accelerating speculative inference for MiniCPM4-8B.

- MiniCPM4-8B-Eagle-FRSpec-QAT-cpmcu: Eagle head trained with QAT for FRSpec, efficiently integrating speculation and quantization to achieve ultra acceleration for MiniCPM4-8B.

- MiniCPM4-8B-Eagle-vLLM: Eagle head in vLLM format, accelerating speculative inference for MiniCPM4-8B.

- MiniCPM4-8B-marlin-Eagle-vLLM: Quantized Eagle head for vLLM format, accelerating speculative inference for MiniCPM4-8B.

- BitCPM4-0.5B: Extreme ternary quantization applied to MiniCPM4-0.5B compresses model parameters into ternary values, achieving a 90% reduction in bit width.

- BitCPM4-1B: Extreme ternary quantization applied to MiniCPM3-1B compresses model parameters into ternary values, achieving a 90% reduction in bit width.

- MiniCPM4-Survey: Based on MiniCPM4-8B, accepts users' queries as input and autonomously generates trustworthy, long-form survey papers.

- MiniCPM4-MCP: Based on MiniCPM4-8B, accepts users' queries and available MCP tools as input and autonomously calls relevant MCP tools to satisfy users' requirements.

- BitCPM4-0.5B-GGUF: GGUF version of BitCPM4-0.5B. (<-- you are here)

- BitCPM4-1B-GGUF: GGUF version of BitCPM4-1B.
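The "90% reduction in bit width" quoted for the BitCPM4 entries can be checked with a short calculation. The sketch below assumes a 16-bit (FP16/BF16) baseline, which the list above does not state explicitly; a ternary weight carries log2(3) bits of information.

```python
import math

# Information content of one ternary weight drawn from {-1, 0, +1}.
bits_per_ternary_weight = math.log2(3)

# Assumption: the full-precision baseline stores each weight in 16 bits.
baseline_bits = 16

# Fraction of the bit width saved by moving to ternary weights.
reduction = 1 - bits_per_ternary_weight / baseline_bits

print(f"bits per ternary weight: {bits_per_ternary_weight:.2f}")
print(f"bit-width reduction vs {baseline_bits}-bit: {reduction:.1%}")
```

Under that assumption the result is roughly 1.58 bits per weight and a reduction of about 90%. This is the theoretical figure only; the size of a file on disk depends on how the chosen storage format packs the weights.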

Introduction

The BitCPM4 models are ternary-quantized models derived from MiniCPM series models through quantization-aware training (QAT), achieving significant improvements in both training efficiency and model parameter efficiency.

- Improvements of the training method

- Searching hyperparameters with a wind-tunnel on a small model.

- Using a two-stage training method: training in high precision first and then applying QAT, which makes the best use of the trained high-precision models and significantly reduces the computational resources required for the QAT phase.

- High parameter efficiency

- Achieving performance comparable to full-precision models of similar parameter count with a bit width of only 1.58 bits, demonstrating high parameter efficiency.
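To make "ternary quantization" concrete, here is a minimal sketch of one common scheme: scale the weights by their mean absolute value, round, and clip to {-1, 0, +1}. This absmean rule is an assumption chosen for illustration; the text above does not specify the exact quantizer used for BitCPM4. The helper names `ternary_quantize` and `dequantize` are hypothetical.

```python
def ternary_quantize(weights, eps=1e-8):
    """Map floats to codes in {-1, 0, +1} plus one shared scale.

    Assumed scheme: the scale is the mean absolute weight (absmean).
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    # Round to the nearest integer, then clip into the ternary range.
    codes = [max(-1, min(1, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Reconstruct approximate float weights from ternary codes."""
    return [c * scale for c in codes]


weights = [0.9, -0.05, 0.4, -1.3, 0.02, -0.6]
codes, scale = ternary_quantize(weights)
print(codes)  # [1, 0, 1, -1, 0, -1]
print([round(w, 3) for w in dequantize(codes, scale)])
```

In quantization-aware training, the forward pass typically uses the quantized weights while the optimizer keeps updating a high-precision copy. That is why starting from an already trained high-precision model, as in the two-stage method above, can shorten the QAT phase.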

Usage

Inference with llama.cpp

./llama-cli -c 1024 -m BitCPM4-0.5B-q4_0.gguf -n 1024 --top-p 0.7 --temp 0.7 --prompt "Please write an article about artificial intelligence that describes in detail its future development and hidden risks."

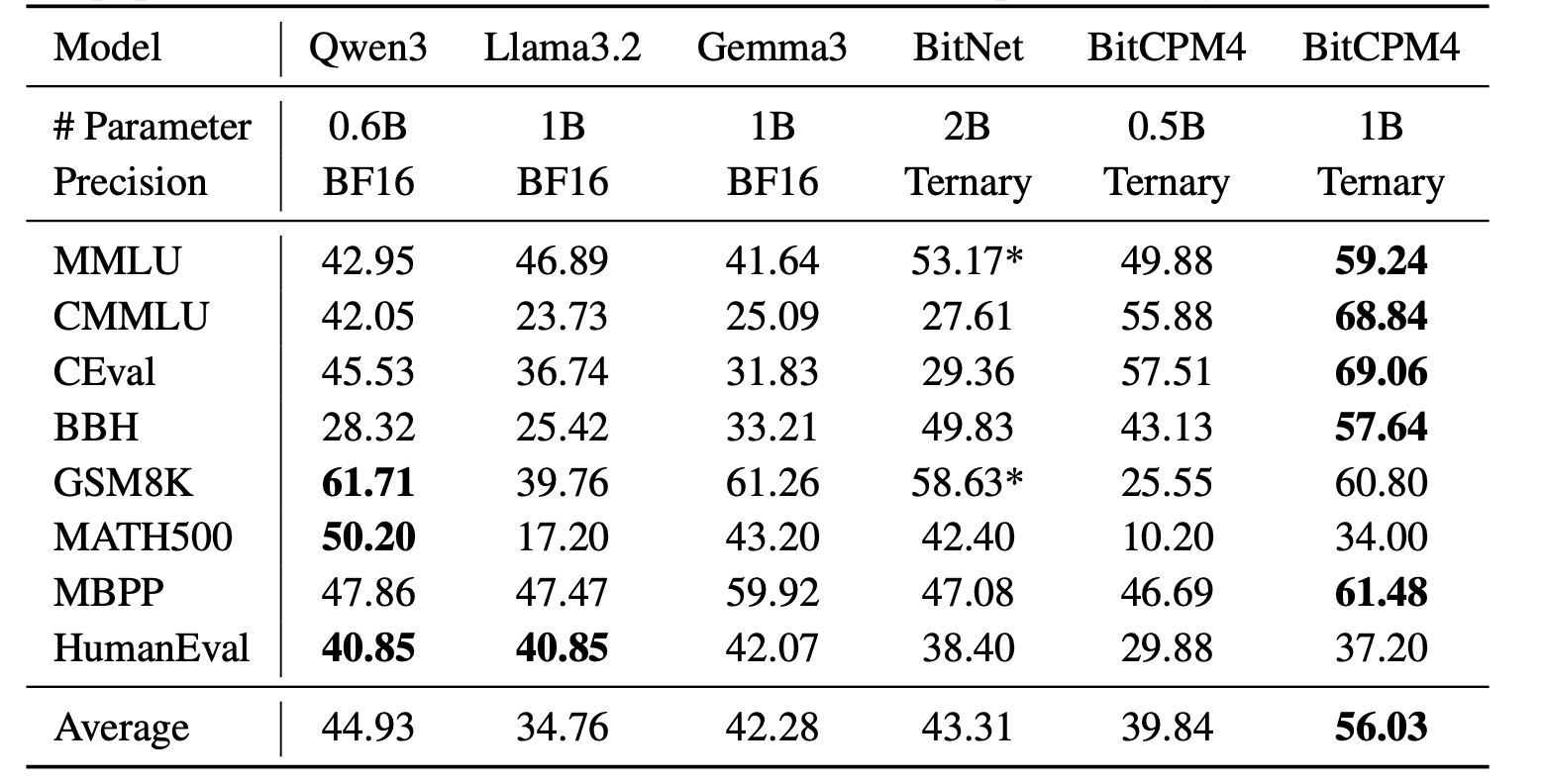
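When the same command is launched from a script, it can help to build the argument list programmatically. The sketch below only assembles and prints the command; the helper name `build_llama_cli_args` is hypothetical, and the default values mirror the flags in the command above (`-c` context size, `-n` number of tokens to generate, `--top-p` and `--temp` sampling settings).

```python
import shlex


def build_llama_cli_args(model_path, prompt, ctx_size=1024, n_predict=1024,
                         top_p=0.7, temp=0.7, binary="./llama-cli"):
    """Return the argument list for a llama-cli run with the flags shown above."""
    return [
        binary,
        "-c", str(ctx_size),    # context window size in tokens
        "-m", model_path,       # path to the GGUF model file
        "-n", str(n_predict),   # maximum number of tokens to generate
        "--top-p", str(top_p),  # nucleus sampling threshold
        "--temp", str(temp),    # sampling temperature
        "--prompt", prompt,
    ]


args = build_llama_cli_args(
    "BitCPM4-0.5B-q4_0.gguf",
    "Write an article about artificial intelligence.",
)
# Print a shell-safe version of the command for inspection.
print(shlex.join(args))
```

Passing `args` to `subprocess.run(args, check=True)` would execute the command, assuming the `llama-cli` binary and the model file exist at those paths.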

Evaluation Results

BitCPM4's performance is comparable to that of full-precision models of the same size.

Statement

- As a language model, MiniCPM generates content by learning from a vast amount of text.

- However, it does not possess the ability to comprehend or express personal opinions or value judgments.

- Any content generated by MiniCPM does not represent the viewpoints or positions of the model developers.

- Therefore, when using content generated by MiniCPM, users should take full responsibility for evaluating and verifying it on their own.

LICENSE

- This repository and MiniCPM models are released under the Apache-2.0 License.

Citation

- Please cite our paper if you find our work valuable.

@article{minicpm4,

title={{MiniCPM4}: Ultra-Efficient LLMs on End Devices},

author={MiniCPM Team},

year={2025}

}
